High Volume Data Ingestion Best Practices for Telcos

Key takeaways

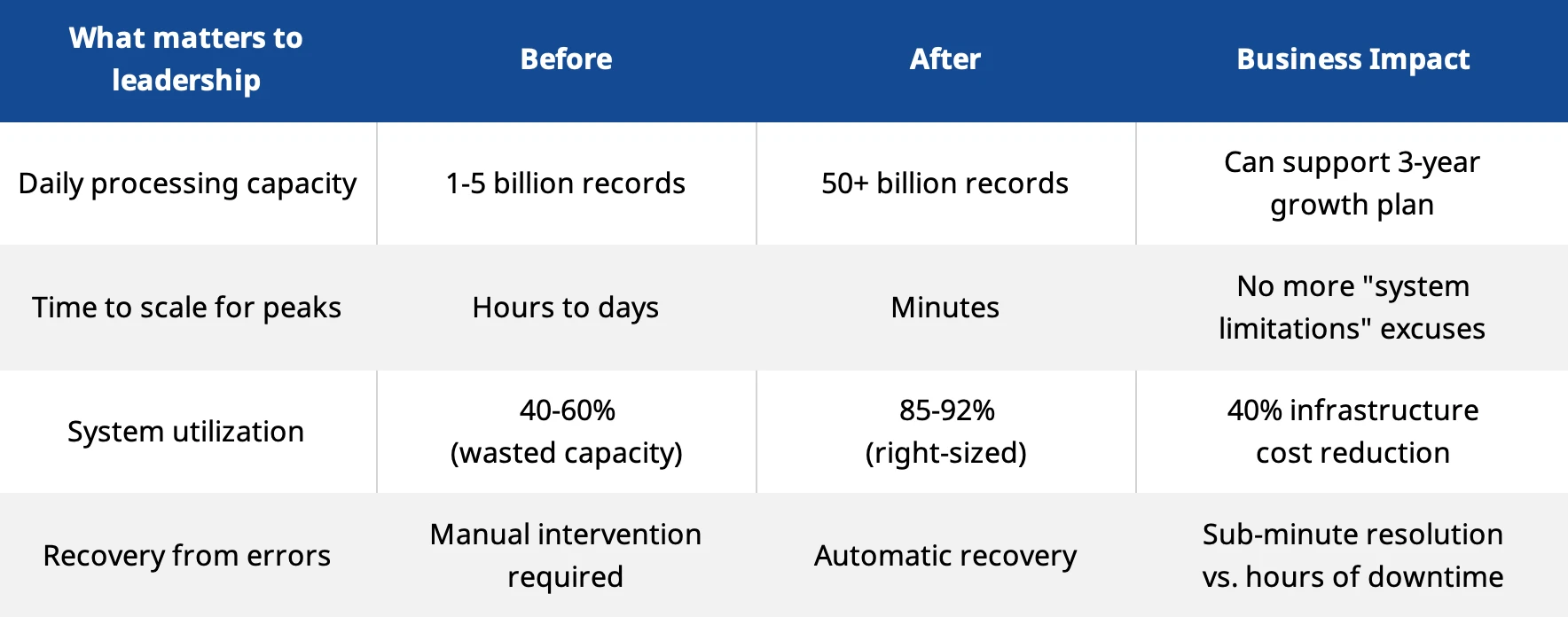

Processing capacity scales to 114 billion records per day through modern architecture that eliminates bottlenecks and prevents revenue-impacting delays

Infrastructure costs drop by 40% when teams stop over-provisioning and start scaling intelligently based on actual demand

Data quality reaches 99.97% accuracy through automated validation that catches errors before they become billing disputes or revenue leakage

Zero-downtime migrations happen when implementation teams use parallel processing strategies instead of risky "big bang" cutovers

Here's a projection that should keep CTOs and IT leaders awake at night: By 2030, global data traffic will surge to an unprecedented 5,016 exabytes monthly .

That's more than 12 times today's levels—equivalent to processing roughly 1,900 terabytes per second on average. For telcos managing this tsunami of data, one ingestion bottleneck—just one—can cost millions in lost revenue or trigger a cascade of customer complaints.

Many telecommunications operators are set to face this challenge with systems built for yesterday's volumes. And if they don’t adapt, the consequences will go beyond processing delays.

Imagine:

Technical debt piling up

Manual workarounds becoming permanent

Every “temporary” fix calcifying into critical infrastructure—all while data volumes double, and then double again.

Facing these challenges requires more than scaling servers or patching legacy systems. This post reveals proven best practices for high-volume data ingestion in telecommunications, showing how operators can handle massive data growth efficiently, maintain accuracy and protect revenue.

Why most IT leaders underestimate the data challenge

The conversation usually starts in a budget meeting. Someone asks: "Can our systems handle 5x growth?" The IT team says yes—and they’re not wrong. Technically, the servers can ingest the data. But raw capacity doesn’t paint the full picture.

The real questions hit later:

Can billing teams reconcile transactions when processing lags 6 hours behind real-time?

What happens when fraud detection runs on yesterday's data instead of this minute's data?

Who explains to the CFO why revenue recognition is delayed by 3 business days?

How does the executive team launch dynamic pricing when systems update rates overnight?

These aren't IT problems. They're business problems that manifest as IT constraints.

Three questions that reveal system readiness

Can the organization handle growth without emergency spending? Current architecture should absorb 5x growth without major infrastructure overhauls. New data sources should integrate in under four weeks, not four months. Otherwise, every business initiative becomes an IT negotiation.

Does revenue recognition keep pace with customer expectations? Dynamic pricing requires real-time processing. So do fraud detection and partner revenue sharing. When systems lag, money leaks. It may not happen dramatically—just 2% here, 3% there. But at volume, it adds up fast.

Do IT teams spend time building or maintaining? When staff training takes over four months, that signals architectural problems. When troubleshooting requires vendor-specific expertise for every component, that signals integration problems. When system changes require cross-team coordination meetings, that signals organizational problems hiding in technical constraints.

How one African operator cleared 500,000 files in four weeks

A major African telecommunications operator faced a choice every IT leader recognizes: spend millions upgrading or spend months suffering. They had 500,000 mediation files backed up. Processing was drowning. Revenue recognition was delayed. Billing disputes were climbing.

Their legacy system was designed for different times. Monolithic architecture. Manual processes. Vendor-specific expertise required for every change. Sound familiar?

CSG's team implemented a different approach:

Parallel processing instead of sequential bottlenecks

Intelligent load balancing instead of hoping for the best

Automated memory management instead of manual tuning

Horizontal scaling instead of vertical limits

The results tell the story better than the technical details:

The backlog cleared in under four weeks. Performance improved 340%. Service never interrupted during migration. No "big bang" cutover drama. No weekend war rooms.

That's what good architecture delivers: business outcomes, not technical specifications.

Best practice #1: Stop building monoliths that turn into money pits

Every IT leader has inherited at least one monolith. That critical system no one wants to touch. The one that requires three specific people to troubleshoot. The one where simple changes take six months because everything connects to everything else.

Here's the uncomfortable truth: monolithic systems fail at telecommunications scale. Not dramatically. Gradually. They slow down, become expensive to change and limit business agility. Eventually, they can turn into career-limiting problems for the leaders responsible for them.

Modern architecture decomposes processing into specialized services:

Data collection services handle network elements independently

Validation services catch errors before they become billing disasters

Routing services direct data based on business rules, not hard-coded logic

Analytics services generate insights without slowing down operations

The business benefits show up in budget meetings:

Infrastructure scales based on actual demand, not worst-case guesses

New services launch in weeks, not quarters

System failures don't cascade across the entire operation

Staffing needs shrink because systems require operations, not constant firefighting

The mistake that kills most modernization efforts

There’s one mistake that quietly kills most modernization efforts: over-decomposition. Teams break everything into tiny services, then drown in integration overhead. Network chatter overwhelms the performance gains. The cure becomes worse than the disease.

The sweet spot sits at 8-15 services for telecommunications data processing. Enough specialization for independent scaling. Not so much fragmentation that coordination becomes impossible.

Think about it from a management perspective: Can a mid-level engineer understand how data flows through the system? Can they troubleshoot problems without escalating to architects? Can they implement changes without cross-team dependencies?

If the answer is no, the architecture is too complex.

Best practice #2: Make data quality someone's job, not everyone's problem

Revenue leakage from data quality issues costs operators measurable percentages of gross revenue annually. However, what makes IT leaders frustrated is that nobody owns the problem.

Billing teams blame the data. Network teams blame the systems. IT teams blame the integrations. Everyone has a theory, and nobody has accountability.

Automated validation changes this dynamic. It catches problems at processing speed, not during monthly reconciliation. It prevents errors instead of apologizing for them. It turns data quality from a finger-pointing exercise into a managed business process.

What automated validation actually prevents

Format problems that create billing disputes:

Network elements send data in varying formats

Timestamps drift across distributed systems

Partner integrations break when external systems change

Calculation errors that leak revenue:

Rating rules get misapplied during high-volume processing

Business logic fails under stress

Manual corrections introduce new problems

Integration failures that delay recognition:

External system changes break connections silently

Equipment malfunctions corrupt data streams

Network failures create gaps in transaction records

CSG's platform processes over 41 trillion events annually with 99.97% first-pass accuracy. Error resolution averages four minutes for automated corrections. That's not a technical achievement—that's preventing millions in revenue losses annually while maintaining regulatory compliance.

The management advantage

When data quality becomes automated, IT leaders gain something valuable: predictability. This is what makes processing times consistent, error rates manageable and revenue recognition reliable. It shifts budget conversations from explaining problems to discussing opportunities.

More importantly, it lets the staff focus on what matters. Teams can stop firefighting and start improving. They get to build new capabilities instead of patching old problems. They directly support business growth instead of constantly explaining technical limitations.

Best practice #3: Build systems that survive crises

The African operator backlog crisis tells the story every IT leader dreads. A major telecommunications operator serving 10+ million subscribers faced:

Nearly 500,000 backlogged mediation files mounting daily

Revenue assurance insights locked in aging, unprocessed data

Thousands of new files arriving each day, compounding the crisis

Imminent risk that files would age beyond processing value

Critical business intelligence frozen while operations waited

All simultaneously. With revenue visibility degrading daily.

Traditional architecture would have required months of manual intervention. Executive escalations. Consultant armies. Extended timelines with no guarantees.

Instead: Backlog cleared in under four weeks. Zero revenue leakage. System now processes 3x daily volume.

What made the difference

Automated load balancing distributed files evenly across multiple processing portals without manual routing decisions. No bottlenecks. No queue management meetings. Just intelligent distribution built into the platform.

Horizontal scaling expanded processing capacity to 32 parallel threads concurrently as the backlog demanded. No hardware procurement delays. No vendor negotiations. Just containers scaling automatically to meet demand.

Intelligent threshold monitoring detected queue buildup and triggered intervention to maintain optimal processing ranges. No performance degradation. No system crashes. Just proactive management embedded in the architecture.

Memory optimization through Python generators maintained peak performance while keeping resource usage low across 50 billion records daily processing capacity.

This is what modern mediation architecture delivers: operational resilience that clears catastrophic backlogs while maintaining daily operations without executive intervention.

Best practice #4: Plan for 6G while running 5G

Here's the conversation that happens in every telecommunications boardroom: The CEO asks when the company can launch that new AI-powered service the competition just announced.

The CTO explains it would require a complete system overhaul. The CFO asks why they're just hearing about this now. The CTO mentions they've been saying the systems need upgrading for two years.

Sound familiar?

This isn't a technology problem—it's a planning problem disguised as a technical constraint. The uncomfortable truth facing IT leaders is simple: decisions made today determine what's possible in 2030. Not just technically possible. Business possible.

Here’s what’s (likely) on the horizon:

Network slicing will create new data types, and edge computing distributes processing—can your current architecture handle distributed coordination?

As processing volumes will increase, AI models must process data at ingestion speed—can current systems integrate AI without separate platforms?

New technologies like holographic communications and autonomous systems with ultra-low latency requirements are on the way— does your current architecture enable these capabilities or prevent them?

It’s important to keep in mind that migration to modern architecture takes roughly two years for large operators. Add several months for planning and procurement. That means decisions made in 2025 determine capabilities in 2027.

Organizations that wait until systems fail? They're not making strategic choices. They're making emergency decisions under pressure with limited options and executive scrutiny.

What this means for IT leaders

The telecommunications data challenge isn't just a technical issue. It's strategic. The question is whether systems that ingest data will enable business growth or limit it.

Modern architecture delivers:

Operational predictability through automation that prevents crises

Business agility through systems that change in weeks, not months

Cost efficiency through right-sized infrastructure that scales with demand

Revenue protection through quality that prevents leakage automatically

Competitive advantage through capabilities that enable new business models

The best practices outlined here provide a proven methodology for building data processing capabilities that scale with changes in the telecommunications industry , while delivering immediate business value through improved operational efficiency and revenue enhancement.

Ready to implement these best practices? CSG's data ingestion platform processes 114 billion records daily while reducing infrastructure costs and eliminating revenue leakage through real-time validation and fraud detection.

Our platform demonstrates these best practices in production environments processing over 41 trillion events annually for leading global telecommunications operators. The proven performance includes 99.9% uptime, sub-millisecond processing latency and audit trails that meet regulatory compliance requirements across multiple jurisdictions.

Connect with our team to learn how to modernize technology and maximize competitive positioning today and into the future.

Related Resources

Master 5G Security with Zero Trust: 7 Best Practices for CSPs

The Zero Trust Security Advantage for CSPs: A Foundation for a Secure Digital Ecosystem